Can stable-diffusion read your mind?

PLUS: Video Game NPCs get smart

Good morning brains,

Welcome back to Bot Eat Brain, the newsletter that’s here to feed your daily AI cravings, byte by byte.

Here’s what we’ve got today:

A new AI technique can access your imagination 🪄

Implications for brain research 🧪

Characters in games use GPT to talk 🎮

Stable Diffusion Model Peeks into Your Mind 🔍

Picture this - you're waiting for your cactus-flavored kombucha at the indie cafe downtown, and you can't quite figure your date out. Are they nervous? Waiting to get out? Or just thinking about what you're thinking? A group of brainy Japanese scientists built an AI model to find out… kinda.

Here’s how it works 🪄: researchers found a way to pair Stability AI’s Stable Diffusion model with brain scans, mapping brain activity to reconstruct the images a person was shown.

Presented Images:

Reconstructed Images:

They didn’t need to train or fine-tune fancy, complex deep-learning models. Just Stable Diffusion’s model from last year and fMRI scans. And the best part? These pics are crispy.

The method uses an image-encoding process that makes sure the reconstructed pictures maintain scary-good resolution. Stable Diffusion’s denoising comes in clutch with this.
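For the curious, here's a toy sketch of what "mapping brain activity to an image encoding" could look like. Big caveats: the linear (ridge regression) mapping, the made-up data, and every name and array size below are our assumptions for illustration, not details given in this newsletter or taken from the researchers' code. In a real pipeline, the predicted encoding would then be handed to Stable Diffusion for denoising into a picture.

```python
import numpy as np


def fit_ridge(X, Y, alpha=1.0):
    """Closed-form ridge regression: W = (X^T X + alpha * I)^-1 X^T Y."""
    n_features = X.shape[1]
    gram = X.T @ X + alpha * np.eye(n_features)
    return np.linalg.solve(gram, X.T @ Y)


def make_toy_data(n_scans=200, n_voxels=50, latent_dim=8, noise=0.01, seed=0):
    """Simulated stand-ins: rows of X are fake fMRI scans, rows of Y are fake image encodings."""
    rng = np.random.default_rng(seed)
    true_W = rng.normal(size=(n_voxels, latent_dim))
    X = rng.normal(size=(n_scans, n_voxels))
    Y = X @ true_W + noise * rng.normal(size=(n_scans, latent_dim))
    return X, Y


# Hypothetical setup: fit on 150 scans, hold out 50 to check the mapping.
X, Y = make_toy_data()
X_train, Y_train = X[:150], Y[:150]
X_test, Y_test = X[150:], Y[150:]

W = fit_ridge(X_train, Y_train, alpha=1.0)

# Predict image encodings for scans the model has never seen.
predicted_latents = X_test @ W

# How closely do predicted encodings track the true ones on held-out scans?
corr = np.corrcoef(predicted_latents.ravel(), Y_test.ravel())[0, 1]
print(f"held-out correlation: {corr:.3f}")
```

The takeaway from the sketch: only the small mapping `W` gets fitted here, which fits the spirit of not training or fine-tuning a big model.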

High-Resolution Image Reconstruction with Stable Diffusion

Plus, the researchers also map different parts of the model to different regions of the brain. Unlike typical neural networks, which tend to be black boxes, this setup can show how the model's components relate to different brain functions.

What this means: researchers can now better understand how the brain processes information and develop targeted interventions to treat disorders. We also suspect it has the potential to one day revolutionize AI by helping us mimic the brain’s structure.

And it still doesn’t end there. They even figured out how to steer the reconstruction with text prompts while still maintaining the original image's appearance.

Sick of the crowd killing the vibes in your memory snapshots? Just mute them.

What Directed Real-Time Image Reconstruction Could Look Like

Where This is Headed: short term, we see people with ALS and other neurological conditions benefiting the most from early versions of this tech. With Elon Musk’s brain implants making progress too, consider looking out for a dream-catcher shipping in a future iOS update (you heard it here first).

TL;DR

Japanese scientists developed a method to reconstruct images from fMRI scans.

The model reconstructs high-resolution images with high fidelity without additional training or fine-tuning of complex deep-learning models.

The model links its interpretations to brain signals, helping scientists better understand what’s going on up there. It also allows for text-based direction while maintaining its high quality.

SPONSORED

Chop cloud bills in half with Salad 🔪

Need to generate thousands of images using stable-diffusion? Want to save money? Then keep reading…

Salad offers developers an easy-to-use and fully managed Inference API for stable-diffusion, allowing them to scale their inferences without worrying about configuring their infrastructure.

Benchmarking Salad against several other “one-click deployment” services reveals that Salad is the most cost-effective solution:

You can generate more than 1000 images per dollar and only pay for what you need. Easily scale from 10,000 images per hour, to zero, and back again.

The secret sauce: community cloud. SaladCloud is a fully people-powered alternative to traditional cloud services.

10,000+ consumer GPUs are available at any moment, offering high availability, top performance, and scalable container solutions for a fraction of the cost.

NPCs Just Got a Whole Lot Smarter 🎮

Hold onto your joysticks, gamers, because NPCs just got a major upgrade. The medieval sandbox game Mount & Blade II: Bannerlord has just gotten ChatGPT conversation mods.

The game emphasizes player freedom, and characters' likes and dislikes affect the plot, so players have been using the mods to have characters generate dialogue and actions that make sense in context.

Now, it isn’t perfect. In the clip above, for example, the load time between lines of generated dialogue isn’t exactly publish-worthy.

Players also claim that interactions tend to come across as robotic and, for lack of a more creative description, AI-generated.

Our Take: When these problems inevitably get solved, AI will be a total game-changer for sandbox-style titles. Game studios are already looking to use AI to replace aspects of traditional game development and create more vivid, realistic NPCs.

Your daily munch 😋

Quick read:

Long read:

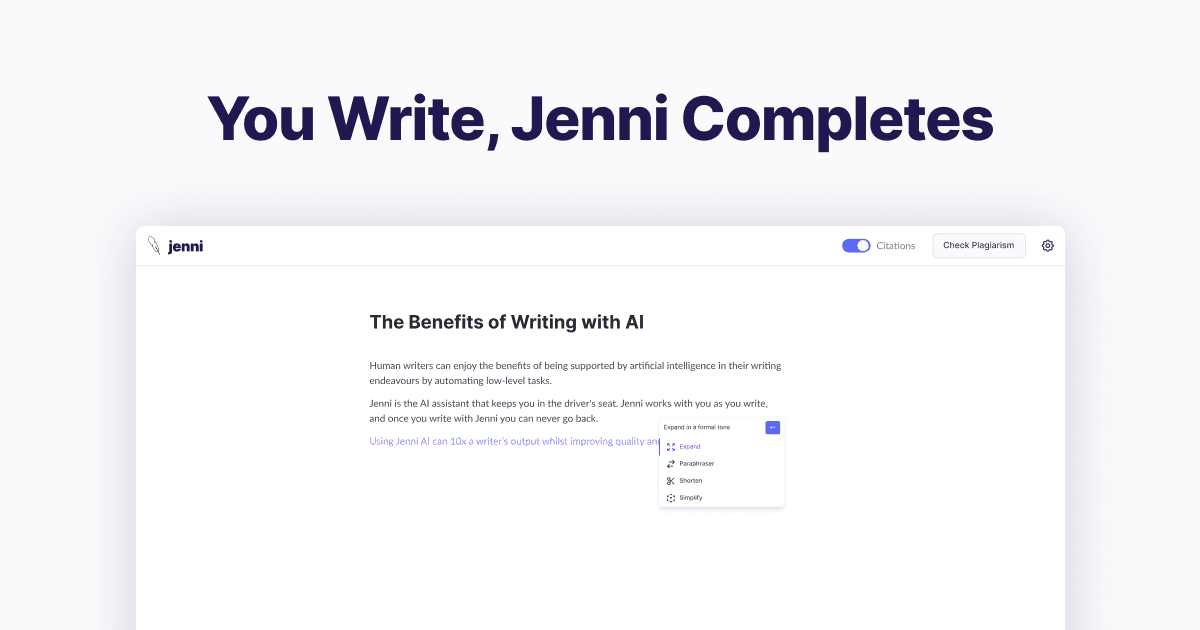

Tool of the Day:

Today's AI-Generated Art Show 🖌️

Until next time 🤖😋🧠